Reimagining humanity and technology. Human and artificial intelligence. Mind and machine.

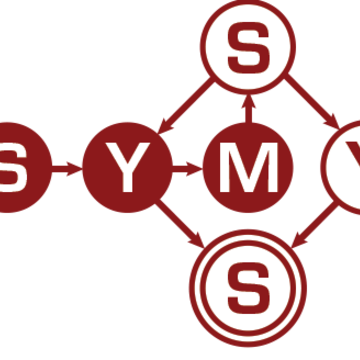

Symbolic Systems is a unique program for undergraduate and graduate students that integrates knowledge from diverse fields of study, including Computer Science • Linguistics • Mathematics • Philosophy • Psychology • Statistics

What can you do with a SymSys major?

Practically anything. Invent. Research. Teach. Lead. With hands-on technical training and a deep understanding of how people think and communicate, your SymSys degree will help you stand out.